Neuroengineering/AugmentedReality

Ubiquitous Computing and Augmented Reality

The discussion thus far has centered on brain-machine interfaces and imagined future brain coprocessors, both as therapeutic and rehabilitative tools and as devices for brain reading and mind control that augment human mental abilities through fairly invasive surgical means. But some of the features of these imagined brain coprocessors may already be in the process of being silently installed by non-surgical means. In the next sections I want to explore developments in progress in the fields of ubiquitous computing, social media, and marketing that for all practical purposes are neurotechnologies of the future.

The infrastructure of ubiquitous computing envisioned two decades ago by Mark Weiser and John Seely Brown offers the nutrient matrix for the posthuman extended minds proposed by Andy Clark and for the collective paraselves fantasized in Nicolelis’s brain-machine interfaces and theorized beautifully by Brian Rotman (Weiser, 1991, 1994; Weiser and Brown, 1996; Clark, 2004; Clark, 2010; Rotman, 2008): namely, a world in which computation would disappear from the desktop and merge with the objects and surfaces of our ambient environment (Greenfield, 2006). Rather than taking work to a desktop computer, we would find many tiny computing devices spread throughout the environment, in computationally enhanced walls, floors, pens, and desks seamlessly integrated into everyday life. We are still far from realizing Weiser’s vision of computing for the twenty-first century. Although nearly every piece of technology we use has one or more processors in it, we have not yet reached the transition point to ubiquitous computing, at which the majority of those processors are networked and addressable. But we are getting there.

There have already been a number of milestones along the road to ubiquitous computing. Inspired by efforts from 1989 to 1995 at Olivetti and Xerox PARC to develop invisible interfaces interlinking coworkers with electronic badges and early RFID tags (Want, 1992, 1995, 1999), the Hewlett-Packard Cooltown project (2000-2005) offered a prototype architecture for linking everyday physical objects to Web pages by tagging them with infrared beacons, RFID tags, and bar codes. Users carrying PDAs, tablets, and other mobile devices could read those tags to view Web pages about the world around them and to engage services such as printers, radios, automatic call forwarding, and continually updated maps for finding like-minded colleagues in settings such as conferences (Barton, 2001; Kindberg, 2002).

While systematically constructed ubiquitous cities based on the Cooltown model have yet to take hold, many of the enabling features of ubiquitous computing environments are arising in ad hoc fashion, fueled primarily by growing worldwide mass consumption of social networking applications and of the wildly popular new generation of smart phones with advanced computing capabilities, cameras, accelerometers, and a variety of readers and sensors. In response to this trend, and building on a decade of Japanese experience with Quick Response (QR) barcodes, in December 2009 Google dispatched approximately 200,000 stickers with bar codes for the windows of its “Favorite Places” in the US, so that people could use their smart phones to find out about them. Besides such consumer-oriented uses, companies like Wal-Mart and other global retailers now routinely use RFID tags to manage industrial supply chains. These practices are now indispensable in hospitals and other medical environments. Such examples are the tip of the iceberg of increasingly pervasive computing applications for the masses. Consumer demand for electronically mediated pervasive “brand zones” such as Apple Stores, Prada Epicenters, and the interior of your BMW, where movement, symbols, sound, and smell all reinforce the brand message and turn shopping spaces and driving experiences into engineered synesthetic environments, is a powerful aphrodisiac for pervasive computing.

Even these pathbreaking developments fall short of Weiser’s vision, which was to engage multiple computational devices and systems simultaneously during ordinary activities without having to interact with a computer through mouse, keyboard, and desktop monitor, and without necessarily being aware of doing so. In the years since these first experimental systems, rapid advances have taken place in mobile computing, including: new smart materials capable of supporting small, lightweight, wearable mobile cameras and communications devices; many varieties of sensor technologies; RFID tags; physical storage on “motes” or “mu-chips,” such as HP’s Memory Spot system, which permits storage of large media files on tiny chips instantly accessible by a PDA (McDonnell, 2010); Bluetooth; numerous sorts of GIS applications for location logging (e.g., Sony’s PlaceEngine and LifeTagging system); and wearable biometric sensors (e.g., BodyMedia, SenseWear). To realize Weiser’s vision, though, we must go beyond these breakthroughs by getting the attention-grabbing gadgets, smart phones, and tablets out of our hands and beginning to interact within computer-mediated environments the way we normally do with other persons and things. Here, too, recent advances have been enormous, particularly in gesture and voice recognition technologies coupled with new forms of tangible interface and information display (Rekimoto, 2008).

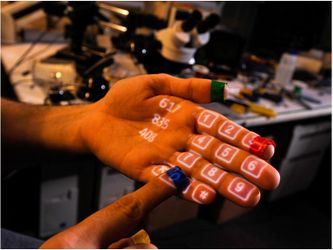

Two prominent examples are the stunning gesture recognition capabilities of the Microsoft Kinect system for the Xbox, which dispenses with a game controller altogether in favor of gesture recognition as the game interface, and the EPOC headset brain controller system from Emotiv Systems. But for our purposes in exploring some of the current routes to neuromarketing and the emergence of a brain coprocessor, the SixthSense prototype developed by Pranav Mistry and Pattie Maes at MIT points even more dramatically to the untethered fusion of the virtual and the real that is central to Weiser’s vision (Mistry, 2009). The SixthSense prototype comprises a pocket projector, a mirror, and a camera built into a small wearable mobile device. Both the projector and the camera are connected to a mobile computing device in the user’s pocket. The camera recognizes objects instantly, and the micro-projector overlays the relevant information on any surface, including the object itself or the user’s hand. The user can then access or manipulate the information with his or her fingers. The movements and arrangements of markers on the user’s hands and fingers are interpreted as gestures that activate instructions for a wide variety of applications projected as application interfaces: search, video, social networking, basically the entire Web. SixthSense also supports multi-touch and multi-user interaction.

Figure 1: Pranav Mistry and Pattie Maes, SixthSense. The system comprises a pocket projector, a mirror and a camera built into a wearable device connected to a mobile computing platform in the user’s pocket. The camera recognizes objects instantly, with the micro-projector overlaying the information on any surface, including the object itself or the user’s hand. (Photos courtesy of Pranav Mistry)

a. Active phone keyboard overlaid on the user’s hand. b. Camera recognizes a flight coupon and projects a departure update onto the ticket. c. Camera recognizes a news story from the web and streams video to the page.

Thus far we have emphasized technologies that are enabling the rise of pervasive computing, but “ubiquitous computing” denotes not only a technical thrust; it is equally a socio-cultural formation, an imaginary, and a source of desire. From our perspective its power becomes transformative in permeating the affective domain, the machinic unconscious. Perhaps the most significant developments driving this reconfiguration of affect are the phenomena of social networking and the use of “smart phones.” More people are not only spending more time online; they are seeking to do it together with other wired “friends.” Surveys by the Pew Internet & American Life Project report that between 2005 and 2008 use of social networking sites by online American adults 18 and older quadrupled from 8% to 46%, and that 65% of teens 12-17 used social networking sites like Facebook, MySpace, or LinkedIn. The Nielsen Company reports that 22% of all time spent online is devoted to social network sites (NielsenWire, June 15). Moreover, the new internet generation wants to connect up in order to share: the Pew Internet & American Life Project has found that 64% of online teens ages 12-17 have participated in a wide range of content-creating and sharing activities on the internet; 39% of online teens share their own artistic creations online, such as artwork, photos, stories, or videos, while 26% remix content they find online into their own creations (Lenhart, 2010, “Social Media”). The desire to share is not limited to text and video but is extending to data-sharing of all sorts. Sleep, exercise, sex, food, mood, location, alertness, productivity, even spiritual well-being are being tracked and measured, shared and displayed. On MedHelp, one of the largest Internet forums for health information, more than 30,000 new personal tracking projects are started by users every month. Foursquare, a geo-tracking application with about one million users, keeps a running tally of how many times players “check in” at every locale, automatically building a detailed diary of movements and habits; many users publish these data widely (Wolf, 2010). Indeed, 60% of internet users are not concerned about the amount of information available about them online, and 61% of online adults do not take steps to limit that information. Just 38% say they have taken steps to limit the amount of online information that is available about them (Madden, 2007, 4). As Kevin Kelly points out, we are witnessing a feedback loop between new technologies and the creation of desire. The explosive development of mobile wireless communications; the widespread use of RFID tags, Bluetooth, embedded sensors, and QR addressing; applications like Shazam for snatching a link and downloading music from your ambient environment; GIS applications of all sorts; and social phones that emphasize social networking, such as the many types of Android phones and the iPhone 4, are creating desire for open sharing, collaboration, even communalism, and above all a new kind of mind (Kelly, 2009a, 2009b). [See Figure 2: a, b, c]

Figure 2: 3D mapping, location-aware applications, and augmented reality browsers.

(Photos courtesy of Earthmine.com and Layar.com)

a. Earthmine attaches location-aware apps (in this case streaming video) to specific real-world locations. b. Earthmine enables 3D objects to be overlaid on specific locations. c. The Layar augmented reality browser overlays information, graphics, and animation on specific locations.


References

Clark, Andy. "Is Language Special? Some Remarks on Control, Coding, and Co-Ordination." Language Sciences 26, no. 6 (2004): 717-26.

Clark, Andy. Supersizing the Mind: Embodiment, Action, and Cognitive Extension. New York: Oxford University Press, 2010.

Rotman, Brian. Becoming Beside Ourselves: The Alphabet, Ghosts, and Distributed Human Being. Durham: Duke University Press, 2008.

Weiser, Mark. "The Computer for the Twenty-First Century." Scientific American 265, no. 3 (1991): 94-104.

———. "Creating the Invisible Interface." Keynote presentation, ACM Symposium on User Interface Software and Technology (UIST '94), Marina del Rey, Calif., 1994.

Weiser, Mark, and John Seely Brown. "Designing Calm Technology." Power Grid Journal 1, no. 1 (1996).

Barton, John, and Tim Kindberg. "The Cooltown User Experience." Hewlett-Packard, 2001.

Kindberg, Tim, John Barton, et al. "People, Places, Things: Web Presence for the Real World." Mobile Networks and Applications 7 (2002): 365-76.

Want, Roy, Andy Hopper, Veronica Falcao, and Jonathan Gibbons. "The Active Badge Location System." ACM Transactions on Information Systems 10, no. 1 (1992): 91-102.

Want, Roy, Kenneth P. Fishkin, Anuj Gujar, and Beverly L. Harrison. "Bridging Physical and Virtual Worlds with Electronic Tags." Proceedings of the ACM Conference on Human Factors in Computing Systems (CHI '99), Pittsburgh, 1999.

Want, Roy, Bill N. Schilit, Norman I. Adams, Rich Gold, Karin Petersen, David Goldberg, John R. Ellis, and Mark Weiser. "An Overview of the PARCTAB Ubiquitous Computing Experiment." IEEE Personal Communications 2, no. 6 (1995): 28-43.

Greenfield, Adam. Everyware: The Dawning Age of Ubiquitous Computing. Berkeley, CA: New Riders, 2006.

Kelly, Kevin. "A New Kind of Mind." The Edge, January 2009.

———. "The New Socialism: Global Collectivist Society Is Coming Online." Wired Magazine 17, no. 06 (2009).

Lenhart, Amanda, Kristen Purcell, Aaron Smith, and Kathryn Zickuhr. "Social Media & Mobile Internet Use among Teens and Young Adults." http://pewinternet.org/Reports/2010/Social_Media_and_Young_Adults.aspx.

Madden, Mary, Susannah Fox, Aaron Smith, and Jessica Vitak. "Digital Footprints." http://www.pewinternet.org/Reports/2007/Digital-Footprints.aspx?r=1.

McDonnell, J.T. Edward, John Waters, Weng Wah Loh, Robert Castle, Fraser Dickin, Helen Balinsky, and Keir Shepherd. "Memory Spot: A Labeling Technology." IEEE Pervasive Computing 9, no. 2 (2010): 11-17.

Mistry, Pranav, and Pattie Maes. "SixthSense: A Wearable Gestural Interface." In ACM SIGGRAPH ASIA 2009 Sketches. Yokohama, Japan: ACM, 2009.

NielsenWire. "Americans Using TV and Internet Together 35% More Than a Year Ago." http://blog.nielsen.com/nielsenwire/online_mobile/three-screen-report-q409/.

———. "Social Networks/Blogs Now Account for One in Every Four and a Half Minutes Online." http://blog.nielsen.com/nielsenwire/global/social-media-accounts-for-22-percent-of-time-online/.

Rekimoto, Jun, Ken Iwasaki, and Takashi Miyaki. "Affectphone: A Handset Device to Present User’s Emotional State with Warmth/Coolness." Paper presented at the B-Interface Workshop at BIOSTEC 2010 (International Joint Conference on Biomedical Engineering Systems and Technologies), 2010. Online at: lab.rekimoto.org/projects/affectphone/

Rekimoto, Jun. "Aided Eyes: Eye Activity Sensing for Daily Life." Sony Labs Open House 2010. Online at: lab.rekimoto.org/projects/aidedeyes/

Rekimoto, Jun, Takashi Miyaki, and Takaaki Ishizawa. "Lifetag: Wifi-Based Continuous Location Logging for Life Pattern Analysis." 3rd International Symposium on Location- and Context-Awareness (2007): 35-49. Online at: lab.rekimoto.org/projects/lifetag/

Rekimoto, Jun. "Organic Interaction Technologies: From Stone to Skin." Communications of the ACM 51, no. 6 (2008): 38-44.

Rekimoto, Jun, Atsushi Shionozaki, Takahiko Sueyoshi, and Takashi Miyaki. "PlaceEngine: A Wifi Location Platform Based on Realworld-Folksonomy." Internet Conference 2006 (2006): 95-104. Online at: lab.rekimoto.org/projects/placeengine/

Wolf, Gary. "The Data Driven Life." New York Times, Sunday Magazine, sec. MM38, April 26, 2010 (online).